Unified Namespace: the popularity of this topic is growing. Many people seem to understand the concept and its value, though they’re still trying to figure out how to implement it in some areas of their manufacturing business.

Today I’ll review the basic concepts, the real value, the challenges, and your options.

The Problem with Manufacturers’ Current Data Systems

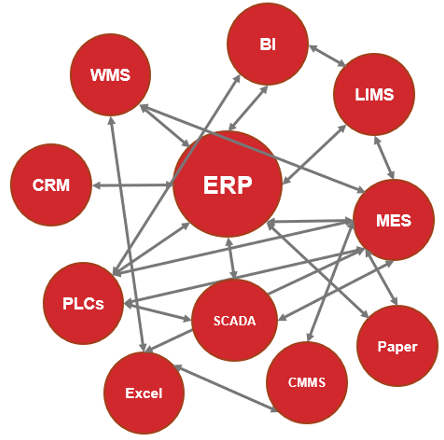

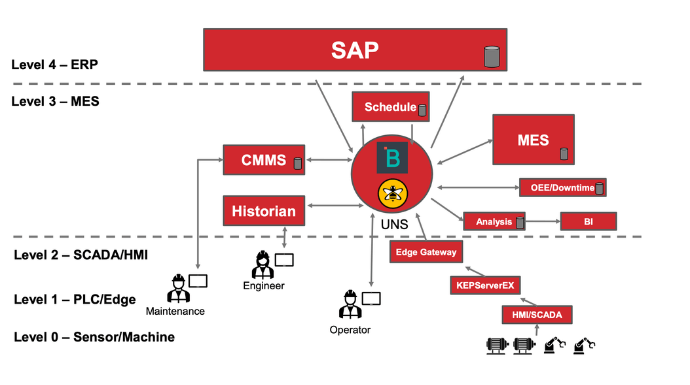

Many, many manufacturers we visit and work with (including plants I saw last week) have a multitude of discrete connections between various equipment (device or telemetry transport data) and other software systems (e.g., ERP, CMMS, etc.; transactional systems).

This architecture is very complicated and expensive to maintain, and not scalable. It often also doesn’t connect all the systems in the company because it’s so complicated and expensive.

As a result, many systems remain siloed. Connecting them requires expensive, time-consuming implementations, yet if they were systematically connected to other systems, their data could provide extraordinary value for analysis.

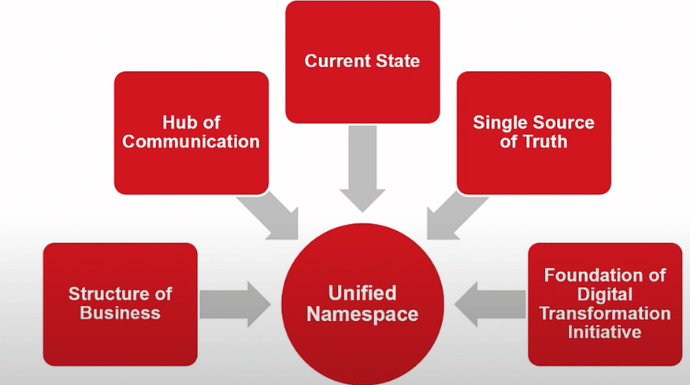

Why the Unified Namespace Should be Your Centralized Data Repository

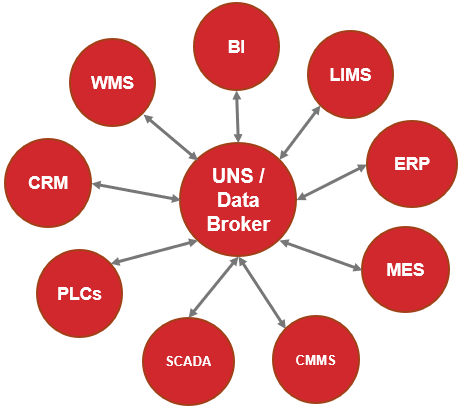

There is a better way, and it doesn’t require you to purchase an incredibly expensive, proprietary, new industrial IoT solution or similar software. What you do need is to apply these two concepts:

- Use a hierarchical structure for the data, and

- Connect all systems to a central hub to promote data interoperability.

The idea is to connect all systems to a central data hub (not a data warehouse). All systems then must connect with one and only one system, a truly centralized repository with closed-loop communication.
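To put a rough number on the difference, here is a small sketch. It assumes the worst case for the point-to-point architecture, where every system integrates directly with every other system; real plants usually sit somewhere below that, so treat the figures as an upper bound rather than a measurement.

```python
def point_to_point_links(n: int) -> int:
    """Worst case: every system is wired directly to every other system."""
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    """Hub model: each system holds exactly one connection, to the hub."""
    return n


# Compare both models for a few (hypothetical) system counts.
for n in (5, 10, 20):
    print(f"{n} systems: {point_to_point_links(n)} direct links vs {hub_links(n)} hub links")
```

The point-to-point count grows with the square of the number of systems, while the hub count grows linearly, which is why the discrete-connection approach stops scaling.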

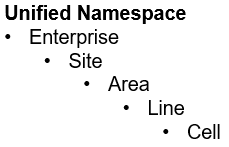

Then, as systems collect data, you set up each system to send (publish) that data to the central hub for use by other systems, and to pull (subscribe to) the data it needs from the same central hub. Additionally, the data coming from all applications should be organized into a predefined hierarchy that lives in that central data hub, with levels such as Site, Area, Line, and Cell.
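The publish/subscribe idea with a hierarchical structure can be sketched in a few lines. This is a toy in-memory hub, not a real broker: the `Hub` class, the `topic` helper, and the names `AcmeCo`, `Plant1`, `Packaging`, `Line3`, and `Filler` are all made up for illustration, and topic matching is exact (no wildcards).

```python
from collections import defaultdict
from typing import Callable

Callback = Callable[[str, object], None]


class Hub:
    """Toy stand-in for a central data hub (exact-match topics only)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callback]] = defaultdict(list)
        self.latest: dict[str, object] = {}  # current value per topic

    def subscribe(self, topic: str, callback: Callback) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, value: object) -> None:
        self.latest[topic] = value
        for callback in self._subscribers[topic]:
            callback(topic, value)


def topic(*levels: str) -> str:
    """Join hierarchy levels into one path, e.g. Enterprise/Site/Area/Line/Cell/tag."""
    return "/".join(levels)


hub = Hub()
received = []

# Hypothetical hierarchy: enterprise / site / area / line / cell / tag
line_speed = topic("AcmeCo", "Plant1", "Packaging", "Line3", "Filler", "speed_rpm")

# One system subscribes to the data it needs...
hub.subscribe(line_speed, lambda t, v: received.append((t, v)))
# ...and another publishes it, without either knowing about the other.
hub.publish(line_speed, 1200)
```

Neither side holds a connection to the other; both only know the hub and the agreed path.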

Challenges

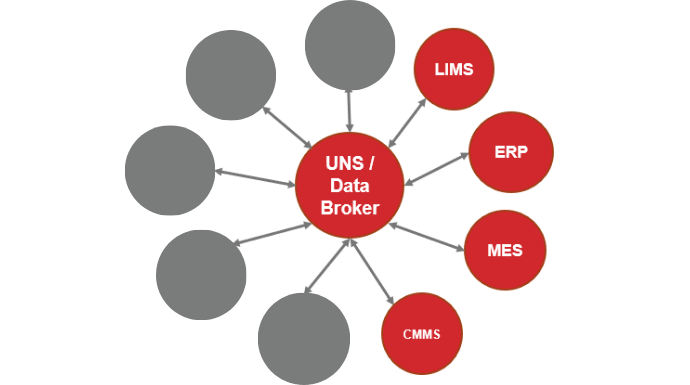

Companies have been quick to adopt this central repository open architecture for their devices or equipment (i.e., telemetry data). Others sometimes need a little help defining their unified namespace and that’s ok.

The more significant struggle is how to build connections with the non-telemetry data systems into the UNS and the MQTT (Message Queuing Telemetry Transport) Data Broker, specifically, systems such as an ERP, CMMS, MES, LIMS, etc.

Each of these systems, although they don’t typically come in contact with much production data, is a critically important element to your business. All systems need to be able to collect information, feed information, and retrieve information.

When we talk about Industry 4.0 and digital transformation, we’re promoting the idea of solving business challenges and improving business processes using real-time data across the entire enterprise. Therefore, it’s essential to connect all of these systems into a Unified Namespace, your sole centralized data repository.

Two challenges:

Where does the transactional data go within the UNS?

For example, do the LIMS lab results belong under the Line or Cell level where the samples were taken, and where does the system display production data to the operator on the plant floor and enable them to react? What about MES data…is that Site, Area, or Line?

How do we technically connect to the transactional systems which don’t operate under the same technical requirements we have?

The 1st challenge is mostly a contextual question which we’ll cover in a video soon. For now, let’s discuss the 2nd challenge.

When defining a potential architecture to use we’ve typically established minimum technical requirements such as:

- Edge driven,

- Report by exception,

- Lightweight, and

- Open technology

More information about these 4 technology requirements is available on our YouTube channel and blog.

This means that when integrating into the UNS and MQTT Broker, the ERP system, as an example, needs to:

- Publish its data to the data broker on its own, without waiting for some other system to request it (i.e., no poll-response);

- Publish the data only when it has changed;

- Publish the data in a non-verbose communication protocol;

- Enable connections to its system for sharing structured data into the Industrial IoT Solutions ecosystem with virtually no restrictions (though assuming cyber security protocols are in place).
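As a minimal sketch of the first three points, assuming a generic `publish(topic, payload)` callback rather than any particular broker client: the publisher pushes on its own, skips unchanged values, and keeps the payload compact. The class name, the topic path, and the `{"v": ...}` payload shape are illustrative assumptions, not part of any standard.

```python
import json
from typing import Callable


class ExceptionReporter:
    """Publishes a value only when it differs from the last value sent."""

    def __init__(self, publish: Callable[[str, str], None]) -> None:
        self._publish = publish
        self._last: dict[str, object] = {}

    def update(self, topic: str, value: object) -> bool:
        """Return True if the value was published, False if it was unchanged."""
        if topic in self._last and self._last[topic] == value:
            return False  # report by exception: nothing changed, send nothing
        self._last[topic] = value
        # Compact JSON (no extra whitespace) keeps the message lightweight.
        self._publish(topic, json.dumps({"v": value}, separators=(",", ":")))
        return True


sent = []
reporter = ExceptionReporter(lambda t, p: sent.append((t, p)))

# Hypothetical topic for an ERP work order status.
order_status = "AcmeCo/Plant1/ERP/WorkOrder/1001/status"
reporter.update(order_status, "RELEASED")  # first value: published
reporter.update(order_status, "RELEASED")  # unchanged: suppressed
reporter.update(order_status, "COMPLETE")  # changed: published
```

In a real deployment the callback would wrap whatever MQTT client you use; the filtering logic stays the same.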

It’s no sweat to get data from equipment via MQTT SpB (Sparkplug B), even if the equipment doesn’t speak it natively. We add a “translating device” between the equipment and the data broker, such as a RedLion device or a software tool from Software Toolbox or others, to convert OPC UA or Modbus TCP to MQTT SpB, essentially turning these devices into MQTT devices.
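Conceptually, the translation step looks something like the sketch below. The topic layout (`spBv1.0/group/message type/edge node/device`) follows the Sparkplug B topic namespace, but everything else is an assumption made for illustration: the register addresses, metric names, and divisors are invented, and the real Sparkplug B payload is a protobuf message, which is left out here.

```python
from typing import Optional

SPARKPLUG_NAMESPACE = "spBv1.0"


def sparkplug_topic(group_id: str, message_type: str,
                    edge_node_id: str, device_id: Optional[str] = None) -> str:
    """Build a Sparkplug B style topic; device_id is omitted for node-level messages."""
    parts = [SPARKPLUG_NAMESPACE, group_id, message_type, edge_node_id]
    if device_id is not None:
        parts.append(device_id)
    return "/".join(parts)


# Hypothetical register map: Modbus address -> (metric name, divisor).
REGISTER_MAP = {
    40001: ("Filler/Speed", 1),
    40002: ("Filler/Temperature", 10),  # raw value is in tenths of a degree
}


def registers_to_metrics(raw: dict[int, int]) -> dict[str, float]:
    """Turn raw register values into named, scaled metrics."""
    return {
        name: raw[address] / divisor
        for address, (name, divisor) in REGISTER_MAP.items()
        if address in raw
    }


topic = sparkplug_topic("Plant1", "DDATA", "Line3Gateway", "Filler")
metrics = registers_to_metrics({40001: 1200, 40002: 215})
print(topic, metrics)
```

A gateway product does far more than this (birth and death certificates, sequence numbers, protobuf encoding), but the core job is the same: read in the device's native protocol, then publish named metrics under an agreed topic.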

But for an ERP system or CMMS where you can connect to the database or via API, what do you do?

There are at least 3 options to address this challenge. I’ll explain each briefly.

Wait

We could hope and expect, and wait for the software companies with relational databases and APIs to change their systems to push out data via report by exception, edge-driven principles, and even better to speak MQTT SpB.

That’s a ton of work for those companies. It’s also a ton of work for a manufacturer to mimic that capability itself (e.g., add triggers to the database tables to create events when certain data changes, then publish the needed data through a system that translates it to MQTT SpB). Though I’ll probably grow old and die before the software companies make that change 😊
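To give a feel for the database-trigger approach, here is a small sketch using SQLite from Python's standard library. The `work_orders` and `outbox` tables, the trigger name, and the topic path are all hypothetical; a real ERP database would have its own schema, its own trigger dialect, and usually vendor restrictions on modifying it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE work_orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL);

-- Change events land here until a publisher picks them up.
CREATE TABLE outbox (
    seq      INTEGER PRIMARY KEY AUTOINCREMENT,
    order_id INTEGER NOT NULL,
    status   TEXT NOT NULL
);

-- Fire only when the status actually changes (report by exception).
CREATE TRIGGER work_order_status_changed
AFTER UPDATE OF status ON work_orders
WHEN OLD.status <> NEW.status
BEGIN
    INSERT INTO outbox (order_id, status) VALUES (NEW.id, NEW.status);
END;
""")


def drain_outbox(conn: sqlite3.Connection, publish) -> int:
    """Publish every pending event in order, then remove it from the outbox."""
    rows = conn.execute(
        "SELECT seq, order_id, status FROM outbox ORDER BY seq"
    ).fetchall()
    for seq, order_id, status in rows:
        publish(f"AcmeCo/Plant1/ERP/WorkOrder/{order_id}/status", status)
        conn.execute("DELETE FROM outbox WHERE seq = ?", (seq,))
    conn.commit()
    return len(rows)


conn.execute("INSERT INTO work_orders (id, status) VALUES (1001, 'RELEASED')")
conn.execute("UPDATE work_orders SET status = 'RELEASED' WHERE id = 1001")  # no change
conn.execute("UPDATE work_orders SET status = 'COMPLETE' WHERE id = 1001")  # real change

published = []
drain_outbox(conn, lambda topic, payload: published.append((topic, payload)))
```

Only the real status change produces an event. Something still has to run `drain_outbox` and translate the events for the broker, which is the extra work described above.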

MQTT SpB

We could add a converter or translator of some type to take the data via the API or from the relational database, then build in the poll-response logic on one side, and the edge-driven, report by exception capabilities on the other side.
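A converter of this kind can be sketched as a small bridge: it polls the source on one side, compares each record to the last snapshot it saw, and publishes only the records that changed on the other side. This is a simplified illustration under stated assumptions, not how HighByte or any other product works; `fetch_rows` stands in for an API call or SQL query, and the class name, key field, and topic prefix are hypothetical.

```python
from typing import Callable, Iterable

Row = dict[str, object]


class PollingBridge:
    """Poll-response toward the source, report by exception toward the broker."""

    def __init__(self, fetch_rows: Callable[[], Iterable[Row]], key: str,
                 publish: Callable[[str, Row], None], topic_prefix: str) -> None:
        self._fetch_rows = fetch_rows
        self._key = key
        self._publish = publish
        self._topic_prefix = topic_prefix
        self._snapshot: dict[object, Row] = {}  # last published state per record

    def poll_once(self) -> int:
        """Fetch all rows, publish the new or changed ones, return how many were sent."""
        changed = 0
        for row in self._fetch_rows():
            row_key = row[self._key]
            if self._snapshot.get(row_key) != row:
                self._snapshot[row_key] = dict(row)  # copy so later edits are detected
                self._publish(f"{self._topic_prefix}/{row_key}", row)
                changed += 1
        return changed


# Stand-in for an ERP table or API response.
erp_table = [{"id": 1001, "status": "RELEASED"}, {"id": 1002, "status": "PLANNED"}]
published = []
bridge = PollingBridge(
    fetch_rows=lambda: erp_table,
    key="id",
    publish=lambda topic, row: published.append((topic, dict(row))),
    topic_prefix="AcmeCo/Plant1/ERP/WorkOrder",
)

first = bridge.poll_once()    # both rows are new, so both are published
second = bridge.poll_once()   # nothing changed, so nothing is published
erp_table[1]["status"] = "RELEASED"
third = bridge.poll_once()    # only work order 1002 is published
```

In practice `poll_once` would run on a timer, and the polling interval becomes the trade-off: shorter intervals mean fresher data but more load on the ERP.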

There are some tools that do this, like HighByte, a great tool, and a great company. But is MQTT SpB the right communication protocol for this data? Possibly not (more discussion to come in the 4.0 Solutions Part 2 podcast referenced at the bottom of this post).

Different UNS Space

Another option is to change where the UNS lives, maybe not in a data broker, but rather in a system that can organize the data in the UNS format and more easily interact with the relational databases or APIs.

Knowledge graph databases with GraphQL “APIs” have been discussed. However, this is changing where the UNS lives more than it is improving how the nodes in the ecosystem like an ERP will interact with the UNS.

Which is the Right Answer for All The Data?

Sadly I can’t give you a definitive answer…it’s one of those “it depends” kinds of answers. It depends on 2 conditions:

- What systems are in your IIoT ecosystem that you need to integrate with; and

- What technologies and capabilities you have to create and execute your architecture.

So far we’ve had the most success using an MQTT SpB data broker for the telemetry data and HighByte for the transactional data, and having the UNS live within that space. We’re also constantly looking for better answers. When we find them, we’ll let you know.

If you want to dig deeper into the unified namespace and digital transformation, you might enjoy a 4.0 Solutions podcast I’m on with some great colleagues: “Using MQTT and UNS architectures at Enterprise level Part 1” on YouTube.